Over 844,000 websites have published an llms.txt file. Not one of the major AI providers has confirmed their systems actually read it.

So why on earth would you publish one?

That is the honest question sitting underneath a lot of developer conversations right now. Your agency or in-house developer has mentioned it. An SEO blog has made it sound urgent. The guidance you have found ranges from “essential for AI search” to “complete waste of time”, with nothing sensible in the middle written for someone who actually has a business to run.

This article sits in that middle. It explains what llms.txt actually is, how it compares to robots.txt and sitemap.xml, what it does today (including the uncomfortable part), and the seven rules to follow before you publish one or decide not to bother. By the end, you will either have a clear checklist to hand to your developer, or a very defensible reason to wait.

What llms.txt is, in plain English

An llms.txt file is a plain-text document you publish at the root of your website that summarises what your site is about and links to your most important pages. The summary is written in markdown and aimed at AI tools that crawl or query your site, giving them a shorter, curated route to your best content rather than making them work through everything you have ever published.

Think of it as a shop assistant standing at the door saying: “We provide accounting software for small businesses. Our main pages are these five. Here is what each one covers.” It does not do the job a search engine index does. It just makes the overview faster and cleaner.

The proposal came from Jeremy Howard, the AI researcher and fast.ai co-founder, in September 2024. He published the original specification at llmstxt.org, where it remains the canonical source. The idea spread quickly through tech and SEO communities, partly because it was simple and partly because nobody could quite agree on whether it would matter.

In practice, the file contains a short paragraph describing the site and a series of markdown links to its most relevant pages, often grouped by section. Anthropic’s own documentation site publishes one. So do Cloudflare, Stripe, and Mintlify. Each one is essentially a human-readable guide to the site’s most important content, written for a reader that processes text at machine speed.
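To make that layout concrete, here is a minimal sketch in Python that reads a small, made-up llms.txt. The sample follows the structure described at llmstxt.org (a title heading, a blockquote summary, then sections of markdown links). The business name, the URLs, and the `parse_llms_txt` helper are all invented for illustration, and the parser is deliberately simple rather than a complete implementation of the format.

```python
import re

# Hypothetical example file; the business and URLs are invented for illustration.
SAMPLE_LLMS_TXT = """\
# Ledgerly

> Ledgerly provides accounting software for small businesses in the UK.

## Product

- [Features](https://example.com/features): What the software does
- [Pricing](https://example.com/pricing): Plans and costs

## Guides

- [Getting started](https://example.com/guides/start): Setup walkthrough
"""

# Matches list items of the form "- [Title](url): optional note".
LINK_RE = re.compile(
    r"^-\s*\[(?P<title>[^\]]+)\]\((?P<url>[^)\s]+)\)(?::\s*(?P<note>.*))?$"
)


def parse_llms_txt(text):
    """Return the title, summary, and links grouped by section heading."""
    title, summary, sections, current = None, None, {}, None
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("# ") and title is None:
            title = line[2:].strip()
        elif line.startswith("> ") and summary is None:
            summary = line[2:].strip()
        elif line.startswith("## "):
            current = line[3:].strip()
            sections[current] = []
        else:
            match = LINK_RE.match(line)
            if match and current is not None:
                sections[current].append((match["title"], match["url"]))
    return {"title": title, "summary": summary, "sections": sections}


parsed = parse_llms_txt(SAMPLE_LLMS_TXT)
```

Here `parsed["sections"]` maps each section heading to its list of (title, URL) pairs, which is roughly the overview a tool reading the file would come away with.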

How llms.txt differs from robots.txt and sitemap.xml

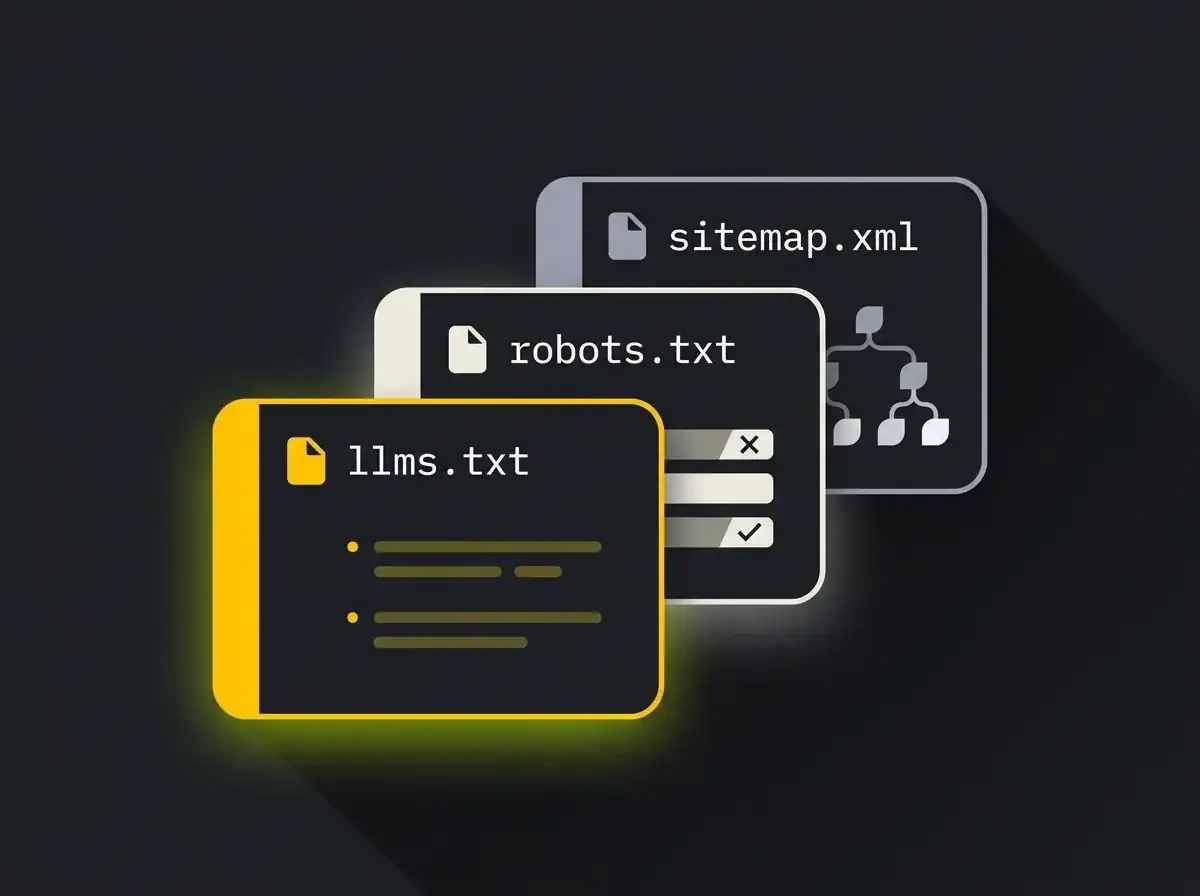

These three files serve three entirely different purposes and talk to three different audiences. Getting this clear is useful, because a significant amount of confusion around llms.txt comes from conflating them.

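robots.txt is a set of instructions for crawlers, telling them which pages they are and are not allowed to access. It has existed since 1994. Google, Bing, and most major web crawlers read it. If a page is blocked in robots.txt, compliant crawlers will not fetch it, though the URL can still be indexed if other pages link to it; keeping a page out of search results altogether takes a noindex directive instead.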

sitemap.xml is a map of your site’s structure. It tells search engines which pages exist, when they were last updated, and how often they change. Search engines use it to discover and prioritise pages for indexing. It is comprehensive by design, because the goal is complete coverage.

llms.txt is neither of those things. It does not block content. It does not replace the sitemap. It is a curated, human-readable summary aimed at AI tools that might benefit from a simpler overview rather than a full index. Additive, not a replacement.

The practical distinction: robots.txt controls access, sitemap.xml aids discovery, and llms.txt helps interpretation. If you are looking at the broader picture of AI search optimisation, all three sit alongside each other rather than competing.

What llms.txt actually does today

Here is the part most blogs skip over.

No major AI provider has officially confirmed that their systems retrieve and use llms.txt content. Not OpenAI, not Google, not Anthropic. Google’s John Mueller stated publicly that no AI search system currently uses llms.txt, a position he has expressed across Reddit and Bluesky threads. That is not a fringe opinion. It is, as of 2025, the honest state of play.

So why are 844,000 websites, according to BuiltWith’s adoption tracker, publishing the file anyway?

Three reasons. First, future-proofing. If llms.txt does become a standard that AI providers formally adopt, sites with a clean and accurate file from the start will have a head start over those scrambling to catch up. Second, brand signalling. In B2B and professional services, being seen to operate at the frontier of AI matters to prospects assessing your credibility. A published llms.txt is a visible, verifiable signal that you are paying attention. Third, controlled framing. If an AI tool does crawl your site and encounters your llms.txt, you have influenced the most likely summary it will construct. That framing carries weight now that AI traffic converts at 5x the rate of traditional search: the visitors who arrive from AI platforms arrive already further along in their thinking.

None of this makes llms.txt a magic solution. But it does make it a reasonable thing to publish, provided you do it correctly.

The 7 rules to follow before you publish your llms.txt

Publishing an llms.txt takes twenty minutes. Publishing a good one takes a bit more thought. These seven rules separate the files that do their job from the ones that sit there creating noise.

Rule 1: treat it as public marketing copy, not technical configuration

Everything in your llms.txt is readable by humans, search engines, and AI tools alike. It is not a config file tucked away in a server directory. It is a published, indexable document that describes your business to anyone who visits that URL.

Write it as you would write a pitch. Clear, specific, focused on the right audience. If you would be uncomfortable with that content appearing on a web page, you should be equally uncomfortable with it sitting in an llms.txt file.

Rule 2: do not duplicate your sitemap. Curate

The point of llms.txt is the opposite of a sitemap. A sitemap aims to be comprehensive. llms.txt should be selective. For most business sites, five to twenty links covering your most important content is appropriate. If you are listing every blog post you have ever published, you have misunderstood the format.

Ask yourself: if an AI tool read only these pages, would it form an accurate and positive picture of what you do and who you serve? If yes, the list is right. If not, revise it before publishing.
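If your developer wants a quick sanity check on the size of the list, something like the following Python sketch would do. It counts the unique URLs linked in the file and compares the total with the five-to-twenty guideline above. The function name and the default thresholds are assumptions for illustration, not part of any specification.

```python
import re

# Finds the URL inside any markdown link of the form [text](https://...).
MARKDOWN_LINK = re.compile(r"\[[^\]]+\]\((https?://[^)\s]+)\)")


def curation_check(llms_text, min_links=5, max_links=20):
    """Count unique linked URLs and say whether the list looks curated."""
    urls = set(MARKDOWN_LINK.findall(llms_text))
    if len(urls) < min_links:
        verdict = "too few links"
    elif len(urls) > max_links:
        verdict = "too many links; this may be duplicating the sitemap"
    else:
        verdict = "within the suggested range"
    return len(urls), verdict
```

A count cannot tell you whether the pages are the right ones. It only catches the obvious failure of listing everything.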

Rule 3: lead with a one-paragraph summary of who you are

The top of the file should be a plain-English description of your business, written for someone who knows nothing about you. AI summarisers will lift this paragraph when constructing answers that reference your brand. That gives it more weight than almost anything else in the file.

Keep it to three to five sentences. Include what you do, who you do it for, and what makes you different. Treat it as your best About Us paragraph, not a keyword-stuffed description.

Rule 4: link to the canonical, current version of each page

If a page has been redirected, your llms.txt entry should point to the destination URL, not the redirect. Linking to a redirect creates unnecessary friction for any tool parsing the file, and it is a credibility gap you can close in ten minutes.

Before publishing, check that each URL in the file resolves cleanly with a 200 status. This is a basic step, but it prevents an embarrassing error sitting permanently at the root of your domain.
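One way to run that check, sketched in Python using only the standard library. It sends a HEAD request to each URL without following redirects, so a redirected page shows up as a 301 or 302 rather than quietly resolving. The function names are hypothetical, and some servers reject HEAD requests, so treat an unexpected status as a prompt to look manually rather than as proof of a problem.

```python
import urllib.error
import urllib.request


class _NoRedirect(urllib.request.HTTPRedirectHandler):
    """Stop urllib from following redirects so 3xx codes stay visible."""

    def redirect_request(self, req, fp, code, msg, headers, newurl):
        return None


def fetch_status(url, timeout=10):
    """Return the HTTP status for a HEAD request, without following redirects."""
    opener = urllib.request.build_opener(_NoRedirect)
    request = urllib.request.Request(url, method="HEAD")
    try:
        with opener.open(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        return err.code  # 3xx, 4xx, and 5xx responses all arrive here


def find_problem_links(urls, get_status=fetch_status):
    """Return {url: status} for every URL that does not answer with 200."""
    problems = {}
    for url in urls:
        status = get_status(url)
        if status != 200:
            problems[url] = status
    return problems
```

Calling `find_problem_links(["https://example.com/pricing"])` returns an empty dictionary when every URL answers with 200. The `get_status` parameter exists so the check can be tried out with a stand-in function before it is pointed at a live site.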

Rule 5: do not use llms.txt to block AI crawling

That is what robots.txt is for. Some businesses have started adding restrictions to their llms.txt files, which creates a confusing contradiction: robots.txt might allow a page while the llms.txt tries to discourage access to it. These two files serve different purposes and should not be mixed.

If you want to prevent AI systems from accessing or training on specific content, your robots.txt is the correct tool for that. Your llms.txt should focus only on what you want AI tools to find and understand.
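For reference, this is roughly what the access-control side looks like, checked with Python’s built-in robots.txt parser. The rules shown are a hypothetical example: GPTBot stands in for an AI crawler, and the `/private/` path and example.com URLs are invented.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt rules; GPTBot is used as an example AI crawler token.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /private/

User-agent: *
Allow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# The AI crawler is blocked from /private/ but allowed elsewhere.
blocked = parser.can_fetch("GPTBot", "https://example.com/private/report")
allowed = parser.can_fetch("GPTBot", "https://example.com/services")
# Other crawlers fall through to the catch-all rule.
other = parser.can_fetch("Googlebot", "https://example.com/private/report")
```

Nothing equivalent exists for llms.txt. The format has no allow or disallow syntax, which is why restrictions written into it have no defined effect.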

Rule 6: validate before publishing

A malformed llms.txt file is worse than no file at all. It signals to anyone checking that your site is poorly maintained. Run the file through a validator before it goes live. Tools like llms-txt-hub offer basic validation, and several others have emerged as the format gained traction.

Check that the markdown is clean, the links all resolve, the formatting is consistent, and the file is accessible at yourdomain.com/llms.txt without any authentication prompt or redirect loop.
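If you would rather not depend on a third-party tool, a few structural checks are easy to script. The Python sketch below is not an official validator. The rules it applies are assumptions drawn from the layout described at llmstxt.org and from Rule 3 above: a title heading first, a blockquote summary somewhere in the file, and list items written as well-formed links.

```python
import re

# A well-formed entry looks like "- [Title](https://...)" with an optional ": note".
LINK_LINE = re.compile(r"^- \[[^\]]+\]\(https?://[^)\s]+\)(: .+)?$")


def lint_llms_txt(text):
    """Return a list of human-readable problems; an empty list means none found."""
    problems = []
    lines = [line.rstrip() for line in text.splitlines()]
    non_blank = [line for line in lines if line]
    if not non_blank or not non_blank[0].startswith("# "):
        problems.append("first line should be a single '# Title' heading")
    if not any(line.startswith("> ") for line in non_blank):
        problems.append("no '> summary' blockquote found")
    for number, line in enumerate(lines, start=1):
        if line.startswith("- ") and not LINK_LINE.match(line):
            problems.append(f"line {number}: list item is not a well-formed link")
    return problems
```

An empty list from `lint_llms_txt` only means the structure looks sound. It says nothing about whether the links resolve or the summary is any good.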

Rule 7: decide your update cadence and stick to it

An llms.txt file that was accurate in late 2024 and has not been touched since is a liability. If your service offer has changed, if you have published important new content, or if pages have been restructured, the file needs updating to match.

A stale file can actively mislead an AI tool about what you currently offer. Worse, it can direct attention towards pages that no longer exist or no longer represent your business. Build the review into your content workflow rather than leaving it as a separate task that gets forgotten.
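One check that can run as part of that workflow is comparing the file against your sitemap. The Python sketch below lists any URL in llms.txt that no longer appears in sitemap.xml. It assumes the sitemap is an accurate list of live pages and that URLs are written identically in both files (same trailing slash, same protocol); the function name is hypothetical.

```python
import re
import xml.etree.ElementTree as ET

# Standard namespace used by sitemap.xml files.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}
MARKDOWN_LINK = re.compile(r"\[[^\]]+\]\((https?://[^)\s]+)\)")


def links_missing_from_sitemap(llms_text, sitemap_xml):
    """Return llms.txt URLs that no longer appear in the sitemap."""
    root = ET.fromstring(sitemap_xml)
    live = {
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    }
    linked = MARKDOWN_LINK.findall(llms_text)
    return [url for url in linked if url not in live]
```

Anything this returns is worth a look. The page may have been removed or renamed, or it may simply be missing from the sitemap.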

If you would rather get it set up properly without working through this alone, that is exactly what our AI search service covers.

How to generate your llms.txt

The simplest route for most WordPress sites is the Yoast SEO plugin, which now generates an llms.txt file automatically. Most WordPress businesses can have a basic version live within minutes of updating the plugin. It is a reasonable starting point, though the auto-generated version will need reviewing and curating before it genuinely reflects your priorities rather than just your site structure.

For documentation sites, Mintlify builds llms.txt generation directly into its platform. If your product runs on Mintlify docs, you may already have a file published without realising it.

For other setups, the generator at llmstxt.org is a useful starting point, and Firecrawl can crawl your site and produce a draft from the content it finds.

The critical caveat applies to all of them. Any auto-generator produces a starting point based on your site’s current structure. It will not know which pages are your most strategically important, which content represents your best work, or how you want your business framed to an AI audience. The curation step is always yours, and it is the step most people skip in a hurry to get something published.

Should you publish one

The honest answer is: probably yes, but only if you have the time to do it properly.

If your site has a genuine content library, if you operate in a sector where AI search already influences buyer behaviour (B2B professional services, agencies, healthcare, financial services, SaaS), or if you want to stay ahead of a standard that may solidify over the next couple of years, a well-crafted llms.txt is a sensible investment of a few hours.

If your site is small, your team is stretched, or you are only doing it because someone told you it was mandatory, hold off until you can do it well. A half-finished file published in a hurry will need fixing later, and the maintenance overhead adds up faster than people expect.

The real question is not “should we publish an llms.txt”. It is “do we have the bandwidth to publish a good one and keep it accurate over time”. If yes, the rules above are your checklist.

What sits beyond llms.txt

llms.txt is one small lever in a much larger question: are AI tools finding, understanding, and recommending your business?

The file addresses the “understanding” part in a narrow way. The “finding” and “recommending” parts are a much bigger body of work. That work covers how AI tools decide which sources to cite, how they choose which businesses to name when answering recommendation-style queries in your category, and how your content needs to be structured to be quotable, specific, and grounded in named expertise. None of that is solved by a single file at the root of your domain.

If you want a clear picture of where your brand currently stands in ChatGPT, Claude, and Perplexity before deciding where to invest, the AI Visibility Audit is the fastest way to find out.

Free resource: AI Visibility Audit

A structured audit of how your brand appears across the major AI search platforms. We check whether you are being recommended, what is being said about you, and where the gaps are. It is the natural next step if you have worked through this checklist and want to understand the bigger picture before deciding where to invest next.

FAQ

What is an llms.txt file?

An llms.txt file is a plain-text document, written in markdown, published at the root of your website. It contains a short description of your site and links to your most important pages, intended to give AI tools a curated summary of your content rather than relying on them to discover and index everything independently.

What is the difference between robots.txt and llms.txt?

robots.txt controls which pages crawlers are allowed to access. It has existed since the 1990s and is respected by the major search engines and by AI crawlers that choose to honour it; compliance is voluntary. llms.txt is different in purpose: it does not block or grant access to anything. It is a voluntary summary that provides context and curated links to help AI tools understand your site’s most important content. The two files serve entirely different roles and should not be confused with each other.

Do AI crawlers actually use llms.txt?

Not confirmed, as of 2025. No major AI provider, including OpenAI, Google, or Anthropic, has officially stated that their systems retrieve and act on llms.txt files. Google’s John Mueller has noted publicly that AI search systems do not currently rely on the file. As Search Engine Land’s overview of the proposal noted, the format is a proposed standard gaining broad adoption ahead of any formal support from the major platforms.

Where do I put my llms.txt file on my website?

The file should live at the root of your domain, accessible at yourdomain.com/llms.txt. It should be publicly accessible without any authentication and return a 200 HTTP status. If it redirects or requires a login to view, any tool trying to read it will either fail silently or encounter the wrong content entirely.