An AI search visibility tool doesn't measure how often you appear in AI search. It measures how often you appear in a sample of prompts that a vendor chose. Those are very different things.

That's worth sitting with, particularly if you have a meeting coming up where someone asks whether you show up in ChatGPT. Because the answer most tools give you is not a reading of reality. It's a reading of a controlled simulation of reality that the vendor built, calibrated, and owns entirely.

None of that makes these tools useless. Some of them are genuinely good. But understanding what they can and cannot measure is what separates a report that survives client scrutiny from one that quietly dies the moment someone asks a follow-up question. This is a tool-agnostic read on how the category works, where it falls short, and how to fold AI visibility into reporting that a senior buyer will actually trust. There is no winner declared here. But there is a clearer picture of what you're buying.

What an AI search visibility tool actually does

The simplest definition: an AI search visibility tool repeatedly queries AI platforms with a fixed set of prompts, checks whether your brand or domain appears in the responses, and reports that data over time.

That's the whole mechanic. Everything else is a feature layer on top of it.
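To make that mechanic concrete, here is a minimal sketch. It assumes a hypothetical `ask` callable standing in for whatever client a tool uses to reach a platform; no real platform API is shown, and `fake_ask`, `Observation`, and the brand "Acme CRM" are invented so the example runs offline.

```python
from dataclasses import dataclass
from datetime import date
from typing import Callable, Iterable


@dataclass
class Observation:
    run_date: date
    prompt: str
    brand_seen: bool


def run_panel(
    prompts: Iterable[str],
    brand: str,
    ask: Callable[[str], str],  # hypothetical: returns the AI response text
    run_date: date,
) -> list[Observation]:
    """Send each prompt once and record whether the brand name appears."""
    results = []
    for prompt in prompts:
        response = ask(prompt)
        seen = brand.lower() in response.lower()
        results.append(Observation(run_date, prompt, seen))
    return results


# Stand-in for a real platform call, so the sketch runs without network access.
def fake_ask(prompt: str) -> str:
    canned = {
        "best crm for small businesses": "Popular options include Acme CRM and others.",
        "recommend an seo agency in london": "Several agencies operate in London.",
    }
    return canned.get(prompt.lower(), "")


panel = ["Best CRM for small businesses", "Recommend an SEO agency in London"]
observations = run_panel(panel, "Acme CRM", fake_ask, date(2025, 1, 6))
```

Storing one `Observation` per prompt per run is what makes the "over time" part possible: every later metric is an aggregation over rows like these.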

The platforms typically tracked include ChatGPT (GPT-4 and above), Google AI Overviews and AI Mode, Perplexity, Claude, Gemini, and Microsoft Copilot. Not all tools track all of these. Most started with ChatGPT and Perplexity and have been adding platforms as the market matures. Google's own documentation on AI Overviews explains how its AI responses draw from indexed web content, which matters when you're trying to understand why citation patterns differ so much across platforms.

Three distinct types of visibility get reported, and the distinction matters. Brand mentions are instances where your company or product name appears in the AI response, even if your website isn't cited. Citations are instances where the AI references your URL as a source. Source attribution is a subset of citation where the AI explicitly names you as the authority behind a specific claim. Most tools collapse all three into a single visibility score. The better ones separate them. A brand mention with no citation might mean you're being talked about because someone else wrote about you, not because you're a trusted source in the AI's reasoning. Being mentioned is not the same thing as being cited.

Why almost every roundup ranks the same tool first

Before going further, a brief note on the state of this topic's SERP. Every substantive roundup you'll find for "AI search visibility tool" was written by a vendor in the category, a publication running affiliate advertising, or a parasite-host content operation earning commission on signups.

Airefs ranks Airefs first. Zerply ranks Zerply first. Trysight ranks Trysight first. The paid placements on student newspaper domains aren't editorial assessments. They are advertorials in editorial formatting.

This matters because you're probably about to spend real money based on one of these lists, or you already have. The vendor-published comparison is the dominant format in this category, and an honest agency-side read on what these tools actually do is genuinely rare right now. Understanding this conflict is the starting point for making a sensible buying decision.

For context on where AI visibility tracking fits within a broader programme, the search visibility and traffic hub gives a useful strategic frame before you commit to any specific tool or metric.

The three things these tools all measure

Most AI search visibility tools converge on three metrics. They differ in execution and accuracy, but this is the common ground across the category.

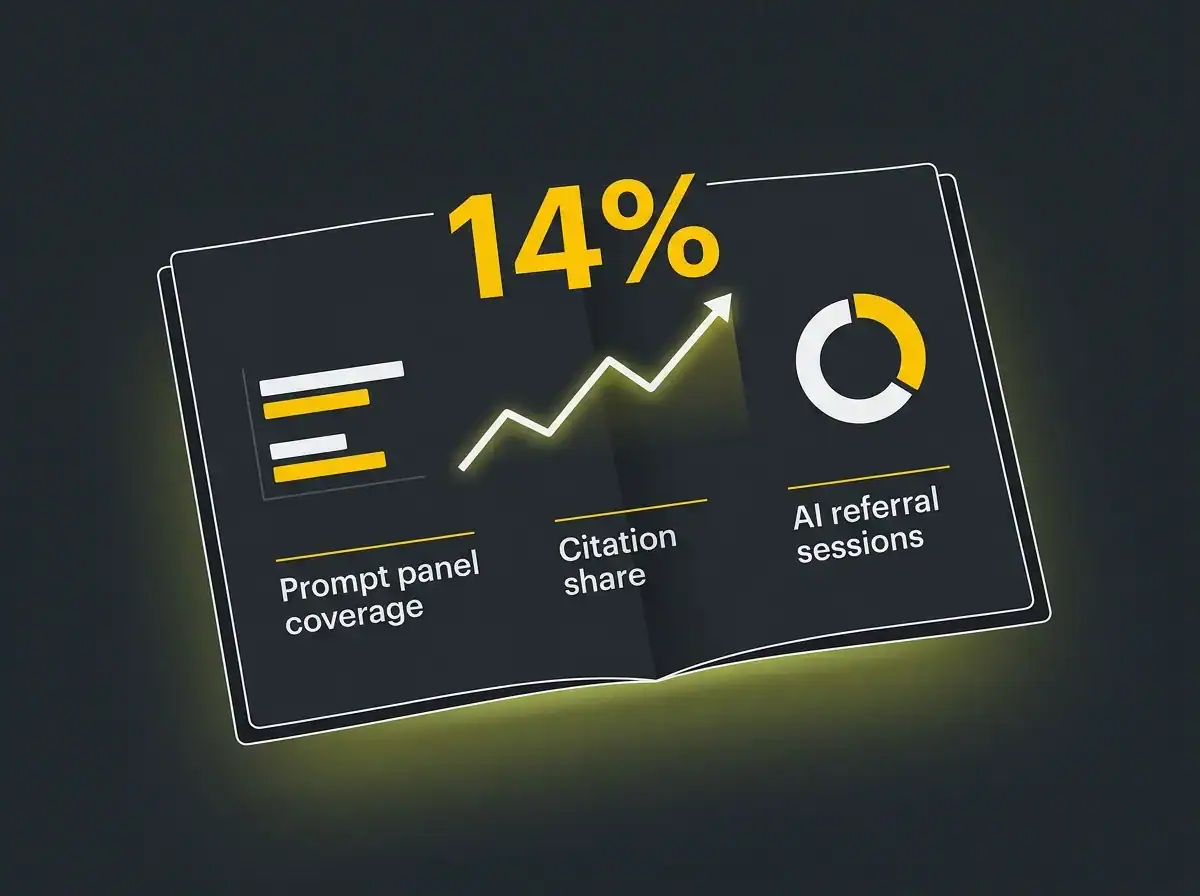

Share of voice across a fixed prompt panel. This is the headline number in almost every dashboard. You're assigned a percentage share of mentions or citations across a set of prompts the vendor defined. A share of 14 percent means you appeared in responses to 14 percent of the prompts in the panel. That sounds clean. It isn't, quite. Share of voice moves week to week partly because of what you're doing, and partly because AI models are updated and the vendor's prompt panel is refreshed. A 30 percent swing over a fortnight can reflect genuine change, or it can reflect that the vendor swapped 50 prompts in their panel. The better tools log panel changes in their changelog. Most don't, which makes trend analysis harder than it should be.
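The panel-churn effect is easier to see with numbers. The sketch below uses invented prompt labels and results; it compares the headline share of voice with a like-for-like figure computed only on prompts that sat in both panels.

```python
def share_of_voice(results):
    """results: dict mapping prompt -> True if the brand appeared."""
    if not results:
        return 0.0
    return 100 * sum(results.values()) / len(results)


def like_for_like(previous, current):
    """Recompute both periods using only the prompts present in both panels."""
    shared = previous.keys() & current.keys()
    prev = {p: previous[p] for p in shared}
    curr = {p: current[p] for p in shared}
    return share_of_voice(prev), share_of_voice(curr)


# Week 1: five prompts, brand appears in one of them.
week1 = {"a": True, "b": False, "c": False, "d": False, "e": False}

# Week 2: the vendor swapped prompts d and e for f and g.
# Nothing changed on a, b, or c; the brand happens to appear on the new prompts.
week2 = {"a": True, "b": False, "c": False, "f": True, "g": True}

headline_before = share_of_voice(week1)   # 1 of 5 prompts
headline_after = share_of_voice(week2)    # 3 of 5 prompts
stable_before, stable_after = like_for_like(week1, week2)  # both 1 of 3
```

Here the headline figure triples while the like-for-like figure does not move at all, which is why an unlogged panel change makes a trend line hard to trust.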

Citation tracking against named source URLs. The tool checks whether any URL from your domain appears as a cited source in AI responses. This is more stable than share of voice because it's binary: either the URL is there or it isn't. It also has a clearer connection to decisions you can act on. If a specific page is being cited consistently, you know that page is doing something right. If nothing is being cited, you have a clear target.

Competitive benchmarking against named rivals. You enter a list of competitor domains, and the tool reports their visibility metrics alongside yours. This is the comparison view your client or board will most likely ask for first. It's also the easiest to misread, because if your competitor is using a different tool, their score is calculated against a different prompt panel. You cannot sensibly compare a Profound share-of-voice score with an Otterly share-of-voice score and draw conclusions. They are not on the same scale.

The two things they almost all miss

Here's where the honest assessment gets uncomfortable. Two gaps run through almost every tool in the category, and neither gets discussed in any vendor roundup.

The first is prompt relevance. Most tools ship with a default prompt panel built from broad category queries: "what is the best CRM for small businesses", "recommend an SEO agency in London". These are sensible starting points. They are not your buyers' actual questions. Your buyers are asking things like "which software handles multi-entity accounts payable in the UK" or "which agency manages AI search for professional services firms in Birmingham". If your tool is measuring a panel of generic prompts, you're getting visibility data that describes a market you may not be in. For specialist businesses, the gap between a vendor's default panel and real buyer language can be enormous.

The second is revenue attribution. None of the pure-play AI search visibility tools in this category offer meaningful pipeline attribution for AI-sourced visits. They can tell you an AI response cited your pricing page. They cannot tell you whether the person who read that response converted into a lead. If AI traffic converts at around five times the rate of conventional organic visits, as is often claimed, this gap is not trivial. Check that multiple against your own analytics before repeating it. A tool that shows you high citation share but can't connect it to pipeline is measuring an output, not an outcome.

A few tools are beginning to address attribution by integrating with GA4 referral data, but this is early work. For now, treat attribution as something you build manually, not something any visibility tool handles for you.

The methodology gap most buyers don't ask about

This is the question that should drive your vendor choice, and almost nobody asks it: how does the tool actually send prompts to the AI platforms, and how often?

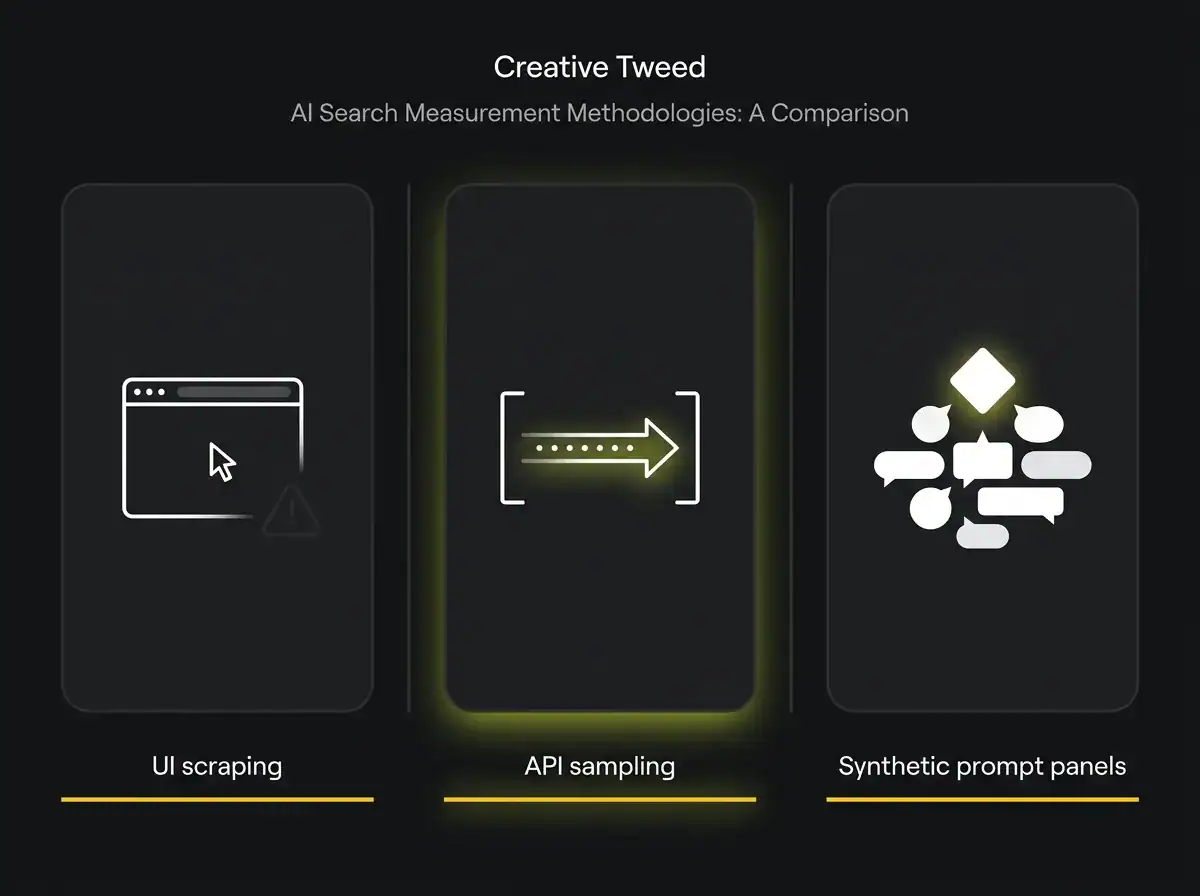

There are three broad approaches in use.

UI scraping is the oldest and cheapest method. The tool opens an automated browser session, types a prompt into the AI platform's web interface, scrapes the response, and stores it. The problems are twofold. The platforms actively detect and block automated sessions, which means results can be unreliable. And the web interface doesn't always produce the same response as a direct API call or a signed-in user session, so you may be measuring a slightly artificial version of what real users see.

API sampling uses the AI platform's official developer API to send prompts programmatically. More reliable than UI scraping, and faster. The catch is that API responses aren't always identical to the consumer product experience. This is particularly relevant for Google AI Overviews, where the consumer and developer surfaces differ.

Synthetic prompt panels are the most sophisticated approach. The tool builds a representative set of prompts for your category, runs them through a combination of API calls and monitored user sessions, and samples responses across multiple time windows to account for model variability. This is the direction the enterprise-tier tools are heading. It's also why they cost meaningfully more.
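The multi-window sampling idea can be sketched as follows. This is an illustration of the principle, not any vendor's implementation: each prompt is observed several times, and the result is an appearance rate with a sample count rather than a single yes or no. The prompt labels and samples are invented.

```python
from collections import defaultdict


def appearance_rates(samples):
    """samples: iterable of (prompt, appeared) pairs from repeated runs.

    Returns a dict mapping prompt -> (appearance rate, number of samples).
    """
    counts = defaultdict(lambda: [0, 0])  # prompt -> [appearances, total runs]
    for prompt, appeared in samples:
        counts[prompt][0] += int(appeared)
        counts[prompt][1] += 1
    return {p: (hits / total, total) for p, (hits, total) in counts.items()}


samples = [
    ("p1", True), ("p1", False), ("p1", True), ("p1", True),
    ("p2", False), ("p2", False),
]
rates = appearance_rates(samples)
```

Keeping the sample count next to the rate matters: a rate built on two samples deserves far less weight than one built on twenty.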

Two reputable tools can give you different visibility scores for the same brand in the same week because they use different methodologies and different prompt panels. That is not a bug. It's a feature of how early this measurement discipline is. What it means practically: don't switch tools mid-year and compare the numbers as if they sit on the same scale. They don't.

A neutral pass through the named players

The category has grown quickly and the naming conventions are still settling. Here is a vendor-neutral summary, grouped by approach rather than ranked by preference.

AI-search-native platforms. These were built from the ground up to measure AI search visibility, rather than adding an AI module to an existing product.

Profound is the most-funded entrant in the category. Funding figures in circulation suggest around $58 million across seed, Series A, and Series B rounds, though that total is unverified here and worth checking against Crunchbase. It's designed for enterprise and mid-market in-house teams. Strong on competitive benchmarking and citation depth, with some early attribution work underway. Suited to in-house teams at larger brands, with pricing that reflects the positioning.

Peec AI is a European entrant with solid multi-language tracking and good prompt customisation. Worth considering for any UK business with continental distribution. Suited to both in-house and agency.

Otterly is arguably the most accessible starting point for agencies and mid-market in-house teams. Clean dashboard, straightforward prompt management, competitive pricing for what it offers. Not the deepest methodology, but honest about its limits. Suited to agencies and in-house teams that are getting started.

Nightwatch is the exception in this group: rather than being built for AI search from the ground up, it has added an AI visibility module to its existing rank-tracking platform. The benefit is consolidation across traditional and AI search in one dashboard. The limitation is that the AI module is still catching up to the pure-play platforms on prompt panel depth. Best for existing Nightwatch customers who want one tool rather than two.

Airefs, Brandlight, and AthenaHQ round out this group. Airefs has built a detailed feature set and is expanding fast. Brandlight focuses more on brand perception across AI responses than pure citation counting, which is a distinct product angle. AthenaHQ has strong prompt customisation for B2B use cases. All three serve both in-house and agency.

Integrated SEO platforms with AI modules. These tools started in traditional SEO and have added AI visibility tracking.

SE Ranking has released an AI visibility tracker sitting alongside its existing rank tracking and site audit tools. If you're already on SE Ranking, review whether the AI module covers your requirements before buying a separate tool. ZipTie, SE Visible, and Promptwatch follow a similar pattern. Rankability has taken a content-focused approach, connecting AI citation data to content recommendations rather than just reporting visibility scores.

Smaller and newer entrants. Knowatoa, Scrunch, Superlines, and AIclicks are all active in the space. Some are well-funded early-stage; some are bootstrapped. The category is moving fast enough that any characterisation here ages quickly. The most reliable check is a free trial and a direct conversation with their support team about methodology. Ask specifically how they construct the prompt panel and how often they refresh it.

For each group, the standard offering includes a free trial. Use it to test the tool against your own custom prompts, not the default panel. That's the only meaningful evaluation.

How to choose prompts that reflect real demand

This is the single most practical thing you can do to get more value from any tool in the category. Most default prompt panels are too broad for specialist businesses. Fixing this is not complicated.

Start with three sources. First, Search Console. Export your top 50 queries by clicks over the last 90 days. The ones phrased as questions or comparisons are direct candidates for your prompt panel. Second, sales call notes or CRM transcripts. The questions your sales team hears in the first conversation are the exact prompts your buyers are typing into ChatGPT. Third, form data and live chat logs. "How do you compare to X?", "Are you suitable for Y industry?", "Does your service cover Z?" These are prompt seeds you own and your competitors don't.

The goal is a panel of 20 to 40 prompts that a real buyer in your segment would plausibly type. Revisit the panel every quarter as language and intent shift.
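For the Search Console step, a rough filter like the one below can shortlist candidates before a human reviews them. It is a sketch under assumptions: the rows are invented, the `(query, clicks)` shape is a simplification of a real export, and the keyword pattern is a starting point rather than a complete definition of "question or comparison".

```python
import re

# Queries that open with a question word, or contain a comparison term.
QUESTION_OR_COMPARISON = re.compile(
    r"^(how|what|which|who|why|when|where|can|does|do|is|are|should)\b"
    r"|\b(vs|versus|compare|compared|alternative|alternatives|best)\b",
    re.IGNORECASE,
)


def prompt_candidates(rows, top_n=50):
    """rows: iterable of (query, clicks) pairs.

    Takes the top_n queries by clicks and keeps the question- or
    comparison-style ones, in descending click order.
    """
    top = sorted(rows, key=lambda r: r[1], reverse=True)[:top_n]
    return [query for query, _ in top if QUESTION_OR_COMPARISON.search(query)]


rows = [
    ("acme login", 900),
    ("which software handles multi-entity accounts payable in the uk", 40),
    ("acme vs rival pricing", 65),
    ("accounts payable software", 120),
]
candidates = prompt_candidates(rows)
```

The filter only produces seeds. Sales call notes and chat logs still need a manual read, because buyer phrasing rarely fits a keyword pattern.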

UK businesses should include UK-specific phrasings: "AI search agency in the UK" rather than just "AI search agency". The default panels in most tools skew American and generalise terms that UK buyers phrase differently. That gap is consistent enough to be worth actively correcting.

A prompt panel built from real buyer language survives a question from your marketing director. When someone asks which prompts you're tracking, "the ones that came from our sales team's call notes" is a stronger answer than "the ones the tool came with".

How to report this to a board or a client without overclaiming

The most common mistake in AI visibility reporting is leading with metrics that sound impressive but don't survive a follow-up question. Here is a format that holds up.

Three numbers worth reporting. First, prompt panel coverage: the number of prompts in your custom panel where your brand appears at least once per month. This is an absolute number that anyone can understand without background. Second, citation share: the percentage of your tracked prompts where your domain is cited as a source, not just mentioned. This is the metric with the clearest connection to authority-building work you can point to. Third, qualified traffic from AI referrers: sessions landing from chatgpt.com, perplexity.ai, claude.ai, and similar, as identified in GA4 source data. This connects the AI visibility work to something the business is already measuring.
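As a sketch of how those three numbers could be computed from data you already hold: the row shapes, field names, and figures below are hypothetical, the referrer list covers only the three domains named above, and what counts as "qualified" is left to your own definition.

```python
# Starting list only; extend it with whichever AI referrers appear in your data.
AI_REFERRER_DOMAINS = {"chatgpt.com", "perplexity.ai", "claude.ai"}


def is_ai_referrer(source: str) -> bool:
    """True if the traffic source is a listed AI domain or one of its subdomains."""
    host = source.strip().lower()
    return host in AI_REFERRER_DOMAINS or any(
        host.endswith("." + domain) for domain in AI_REFERRER_DOMAINS
    )


def report_numbers(panel_rows, session_rows):
    """panel_rows: one dict per tracked prompt with 'mentioned' and 'cited' booleans
    for the month. session_rows: (source, sessions) pairs from analytics."""
    total = len(panel_rows)
    coverage = sum(1 for row in panel_rows if row["mentioned"])
    cited = sum(1 for row in panel_rows if row["cited"])
    return {
        "panel_coverage": coverage,  # absolute count of prompts
        "citation_share_pct": 100 * cited / total if total else 0.0,
        "ai_referral_sessions": sum(
            n for source, n in session_rows if is_ai_referrer(source)
        ),
    }


panel_rows = [
    {"mentioned": True, "cited": True},
    {"mentioned": True, "cited": False},
    {"mentioned": True, "cited": False},
    {"mentioned": False, "cited": False},
    {"mentioned": False, "cited": False},
]
session_rows = [
    ("chatgpt.com", 80),
    ("google", 1200),
    ("perplexity.ai", 30),
    ("www.claude.ai", 10),
]
numbers = report_numbers(panel_rows, session_rows)
```

Each figure traces back to a countable thing, a prompt or a session, which is what lets it survive a follow-up question.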

Two numbers to avoid. Anything labelled "estimated reach" or "AI impressions" is a synthetic extrapolation. A confident finance director will ask how it was calculated. "The tool estimates it based on model usage patterns" tends to erode trust in everything else on the page. Leave these out until the methodology behind them has been published and independently verified by someone other than the vendor.

A useful illustrative shape: a UK B2B services business running a 30-prompt custom panel over six months showed citation share rising from 8 to 14 percent across ChatGPT and Perplexity, with qualified AI referral traffic growing from 120 to 340 sessions per month over the same period. (These figures are illustrative, not client data.) That progression tells a clear story. Citation share rising says authority is building. Traffic rising says it's translating into visits. Together they earn continued budget. Separately, either number is easier to dismiss.

When you present these figures, pre-empt the most likely follow-up: "and here's how we know the prompts we're tracking reflect what our buyers actually ask." That answer is the difference between a report that builds confidence and one that opens a sceptical conversation you weren't ready for.

If you want a structured way to establish this baseline before committing to a tool, the AI Visibility Audit is where we start with most clients. It maps where you currently appear across ChatGPT, Perplexity, Google AI Overviews, and Claude before any subscription is in place, so the tool choice is informed by real data rather than vendor marketing.

When you don't need a tool yet

There's a stage of business where a manual check is more useful than a monthly subscription to anything in the category.

The manual approach: open ChatGPT, Perplexity, and Google in AI Mode. Run 20 prompts from your list. Note which produce responses where you're mentioned, which cite your URL, and which name a competitor instead. Log it in a spreadsheet. Repeat every six weeks. The cost is two hours of time. The output is a directional read on whether you have an AI visibility problem.
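If it helps to fix the spreadsheet layout before the first check, one possible set of columns is sketched below. The column names and the sample row are suggestions, not a standard; the point is to record mention, citation, and competitor separately from the first run.

```python
import csv
import io

# Hypothetical column layout for the manual log.
FIELDS = [
    "check_date",
    "platform",
    "prompt",
    "mentioned",
    "cited_url",
    "competitor_named",
]


def write_log(rows):
    """Serialise log rows to CSV text that any spreadsheet can open."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


rows = [
    {
        "check_date": "2025-01-06",
        "platform": "ChatGPT",
        "prompt": "AI search agency in the UK",
        "mentioned": "yes",
        "cited_url": "",
        "competitor_named": "Rival Ltd",
    }
]
log_text = write_log(rows)
```

Keeping the same columns on every run means the log can later be turned into a custom prompt panel without rework.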

This works until one of two things happens. The first is that you need to track more than 30 prompts consistently and the manual process starts missing things. The second is that a client or board requires regular, auditable reporting and a spreadsheet is no longer a credible format. At that point, a tool earns its subscription.

Many businesses that are currently paying for an AI search visibility tool would be better served running the manual process first, using the output to build a proper custom prompt panel, and then starting a tool subscription once they know what to measure. Starting with a tool before you know your prompts produces tidy-looking data that is tracking the wrong things. That's a common and expensive way to end up with a dashboard nobody can act on.

Where this category goes next

The most pressing technical gap is multi-turn conversation tracking. Every tool in the category currently measures single-prompt responses. But buyers increasingly interact with AI platforms in extended conversations: a follow-up question, a refinement, a return to the same thread the next day. How a brand performs in those later turns can differ substantially from how it performs in the opening prompt. No tool measures this reliably yet.

Google AI Mode is the factor most likely to change methodology requirements fastest. AI Mode integrates AI responses directly into the search result page in a way that blurs the distinction between traditional ranking and AI citation. BrightEdge research on AI search frequency has tracked how often AI Overviews appear for different query types, and those patterns give some indication of how measurement needs will shift as AI Mode reaches wider rollout. Tools that have built their architecture around prompt-based measurement will adapt more easily than those that have bolted AI modules onto traditional rank trackers.

AI citation share also correlates strongly with the quality and authority of individual pages and the domain they sit on. AI platforms don't cite weak content from low-authority domains with any consistency. That means the SEO agency work that builds domain authority and page quality remains the foundation that AI visibility is built on top of. The two disciplines are not independent, and they are converging.

Consolidation among vendors is likely over the next twelve months. Fifteen-plus credible players is too many for the current market size. Expect acquisitions, particularly of the smaller pure-play platforms by established SEO tools looking to round out their AI features. The businesses that build the measurement discipline now will be better positioned when consolidation reshapes the tool landscape, because they'll already know which metrics matter and which ones were just vendor noise.

This is a measurement problem before it is a tool problem

The right AI search visibility tool is the one built around prompts that reflect your actual buyers, with a methodology transparent enough to explain to a finance director, and a reporting structure that connects citation data to something the business is already monitoring.

That sounds like a demanding bar. Most of the vendors covered above can clear it with the right configuration and a custom prompt panel. None of them clear it out of the box.

The mistake most businesses make is treating this as a tool selection problem when it's actually a measurement design problem. Decide what you're trying to measure, build prompts that reflect your buyers, establish a baseline manually, then choose the tool that makes that measurement sustainable at your scale and budget. Not the other way around.

For businesses that want to compress that groundwork and move faster, an AI search agency that has already built the measurement infrastructure across multiple client accounts can reduce the timeline significantly. The approach that works is to start with the audit, build the prompt panel with input from the client's sales team, and run the first manual baseline before any tool is commissioned. The tool follows the method.

The metric matters less than the discipline of measuring it.

Free resource: AI Visibility Audit

Find out where you currently appear across ChatGPT, Perplexity, Google AI Overviews, and Claude. We check against prompts drawn from your buyers' actual language, not a vendor's default panel, and deliver a baseline report you can share with your board or your client in the same week.

Frequently asked questions

What does an AI search visibility tool measure?

An AI search visibility tool measures how often your brand, website, or content appears in responses from AI platforms such as ChatGPT, Google AI Overviews, Perplexity, and Claude. It does this by running a fixed set of prompts through those platforms at regular intervals and recording when your brand is mentioned or your domain is cited as a source. The core metric most tools report is share of voice: the percentage of tracked prompts where you appear, compared to a set of competitors.

Are AI visibility tools accurate?

They are consistent rather than accurate, which is a meaningful distinction. Because each tool uses its own prompt panel and its own methodology, two tools measuring the same brand in the same week will typically produce different scores. The data is most useful as a trend line over time within a single tool, and least useful as an absolute benchmark against a competitor using a different tool. Accuracy improves significantly when you replace the vendor's default prompt panel with prompts drawn from your real buyer behaviour.

How often should you check AI visibility?

For most businesses, monthly is the right cadence for formal reporting, with a manual spot-check every six weeks as an independent check on what the tool is reporting, particularly after a significant model update. Daily monitoring is available in some platforms but produces variance that is mostly noise at this stage of the category's development. Weekly is sensible if you're running an active content programme and want to track the impact of specific pages quickly.

Do you need a paid tool to track AI visibility?

Not at the early stage. A manual check of 20 prompts across ChatGPT, Perplexity, and Google AI Mode, logged in a spreadsheet and repeated every six weeks, gives you the directional data you need to understand whether you have a visibility problem before spending on a subscription. A paid tool earns its cost when you need consistent tracking across a larger prompt panel, competitive benchmarking, or reporting in a format that a board or client can review at scale.

Which AI search visibility tool is the best?

There is no universal best. The right choice depends on which platforms you need to track, the size of your prompt panel, whether you need multi-language support, and whether you're running in-house or through an agency. Profound and Peec AI are strong for enterprise and mid-market in-house teams. Otterly is often the most practical starting point for agencies. SE Ranking is worth reviewing if you already use it for traditional SEO. The only meaningful evaluation is to trial two or three tools against the same custom prompt panel and compare what they actually return.